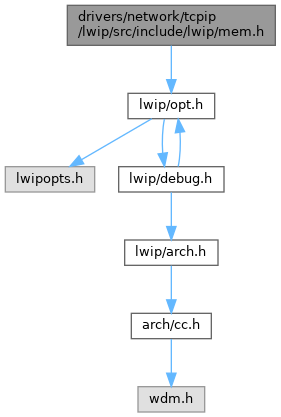

#include "lwip/opt.h"


Macros

| #define | MEM_SIZE_F U16_F |

Typedefs

| typedef u16_t | mem_size_t |

Functions

| void | mem_init (void) |

| void * | mem_trim (void *mem, mem_size_t size) |

| void * | mem_malloc (mem_size_t size) |

| void * | mem_calloc (mem_size_t count, mem_size_t size) |

| void | mem_free (void *mem) |

Detailed Description

Heap API

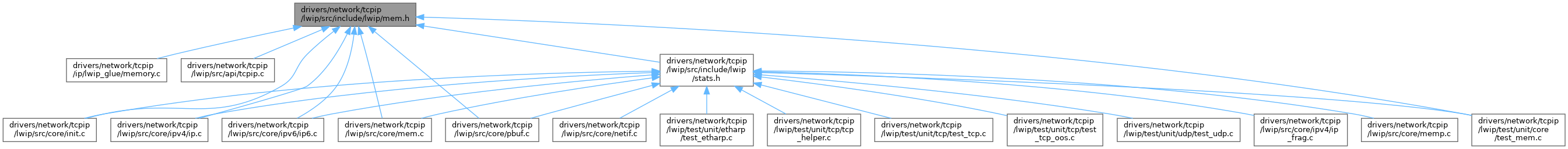

Definition in file mem.h.

Macro Definition Documentation

◆ MEM_SIZE_F

| #define MEM_SIZE_F U16_F |

Typedef Documentation

◆ mem_size_t

| typedef u16_t mem_size_t |

Function Documentation

◆ mem_calloc()

| void * mem_calloc (mem_size_t count, mem_size_t size) |

Contiguously allocates enough space for count objects that are size bytes of memory each and returns a pointer to the allocated memory.

The allocated memory is filled with bytes of value zero.

- Parameters

- count: number of objects to allocate
- size: size of the objects to allocate

- Returns

- pointer to allocated memory / NULL pointer if there is an error
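The calloc-style contract described above (space for `count` objects of `size` bytes, zero-filled, NULL on error) can be illustrated with a minimal sketch. This is not the lwIP implementation: `sketch_calloc` and `sketch_mem_size_t` are hypothetical names, the C library's `malloc()` stands in for the lwIP heap, and rejecting totals that do not fit the 16-bit size type is an assumption about what "an error" may include.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Stand-in for mem_size_t, which this page documents as a typedef of u16_t. */
typedef uint16_t sketch_mem_size_t;

/* Hypothetical helper: calloc-style allocation on top of the C library heap. */
static void *sketch_calloc(sketch_mem_size_t count, sketch_mem_size_t size)
{
  /* Compute the total in 32 bits so the product of two 16-bit values cannot wrap. */
  uint32_t total = (uint32_t)count * (uint32_t)size;
  void *p;

  /* Assumption: a total that does not fit the 16-bit size type is an error. */
  if (total > UINT16_MAX) {
    return NULL;
  }

  p = malloc((size_t)total);
  if (p != NULL) {
    /* Fill the whole block with zero bytes, as the description requires. */
    memset(p, 0, (size_t)total);
  }
  return p;
}
```

A caller would check the result against NULL before use and release the block with the matching free routine (here `free()`, in lwIP `mem_free()`).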

Definition at line 999 of file mem.c.

Referenced by bridgeif_fdb_init().

◆ mem_free()

| void mem_free (void *rmem) |

Put a struct mem back on the heap.

- Parameters

- rmem: the data portion of a struct mem, as returned by a previous call to mem_malloc()

Definition at line 617 of file mem.c.

◆ mem_init()

| void mem_init (void) |

Zero the heap and initialize start, end and lowest-free.

Definition at line 516 of file mem.c.

Referenced by lwip_init().

◆ mem_malloc()

| void * mem_malloc (mem_size_t size_in) |

Allocate a block of memory of at least size_in bytes.

- Parameters

- size_in: the minimum size of the requested block in bytes

- Returns

- pointer to allocated memory or NULL if no free memory was found.

Note that the returned value will always be aligned (as defined by MEM_ALIGNMENT).
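The alignment guarantee can be made concrete with a small sketch. It assumes an alignment of 4 bytes purely for illustration (the real value comes from MEM_ALIGNMENT in the lwIP configuration), and `SKETCH_MEM_ALIGNMENT`, `sketch_align_size` and `sketch_is_aligned` are hypothetical names, not lwIP API. Rounding the requested size up to a multiple of the alignment is one common way an allocator keeps every following block aligned; whether lwIP does exactly this is not stated on this page.

```c
#include <stddef.h>
#include <stdint.h>

/* Assumed alignment for illustration only; must be a power of two. */
#define SKETCH_MEM_ALIGNMENT 4U

/* Round a requested size up to the next multiple of the alignment. */
static size_t sketch_align_size(size_t size)
{
  return (size + SKETCH_MEM_ALIGNMENT - 1U) & ~(size_t)(SKETCH_MEM_ALIGNMENT - 1U);
}

/* Check whether a pointer satisfies the assumed alignment. */
static int sketch_is_aligned(const void *p)
{
  return ((uintptr_t)p % SKETCH_MEM_ALIGNMENT) == 0;
}
```

With the assumed alignment of 4, a request for 5 bytes would occupy 8 bytes, and a pointer returned by an aligned allocator passes `sketch_is_aligned()`.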

Definition at line 831 of file mem.c.

Referenced by do_memp_malloc_pool(), malloc_keep_x(), mem_calloc(), pbuf_alloc(), slipif_init(), and START_TEST().

◆ mem_trim()

| void * mem_trim (void *rmem, mem_size_t new_size) |

Shrink memory returned by mem_malloc().

- Parameters

- rmem: pointer to memory allocated by mem_malloc() that is to be shrunk
- new_size: required size after shrinking (must be smaller than or equal to the previous size)

- Returns

- For compatibility reasons, currently always rmem itself, or NULL if new_size is larger than the old size, in which case rmem is NOT touched or freed.
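Because a NULL return leaves the original block intact, a caller should not overwrite its only pointer with the result. The sketch below models just the documented return contract: `sketch_trim` and `sketch_shrink_or_keep` are hypothetical stand-ins, and the explicit `old_size` argument is an assumption needed because this stand-in has no heap metadata to consult.

```c
#include <stddef.h>

/* Hypothetical stand-in that models only the documented return values. */
static void *sketch_trim(void *rmem, size_t old_size, size_t new_size)
{
  if (new_size > old_size) {
    /* Growing is not supported: the block is neither touched nor freed. */
    return NULL;
  }
  /* A real allocator would hand the unused tail back to the heap here. */
  return rmem;
}

/* Caller pattern: keep the original pointer when the trim is refused. */
static void *sketch_shrink_or_keep(void *buf, size_t old_size, size_t new_size,
                                   int *shrunk)
{
  void *result = sketch_trim(buf, old_size, new_size);

  *shrunk = (result != NULL);
  return (result != NULL) ? result : buf;
}
```

Either way the caller still owns a valid block afterwards and remains responsible for freeing it exactly once.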

Definition at line 699 of file mem.c.

Referenced by pbuf_realloc() and START_TEST().