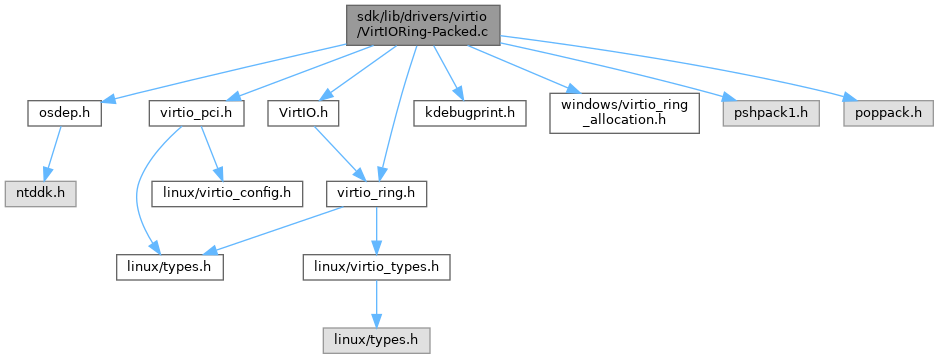

#include "osdep.h"

#include "virtio_pci.h"

#include "VirtIO.h"

#include "kdebugprint.h"

#include "virtio_ring.h"

#include "windows/virtio_ring_allocation.h"

#include <pshpack1.h>

#include <poppack.h>

| Type | Function |
| --- | --- |
| static bool | vring_need_event (__u16 event_idx, __u16 new_idx, __u16 old) |
| unsigned int | vring_control_block_size_packed (u16 qsize) |
| unsigned long | vring_size_packed (unsigned int num, unsigned long align) |
| static int | virtqueue_add_buf_packed (struct virtqueue *_vq, struct scatterlist sg[], unsigned int out, unsigned int in, void *opaque, void *va_indirect, ULONGLONG phys_indirect) |
| static void | detach_buf_packed (struct virtqueue_packed *vq, unsigned int id) |
| static void * | virtqueue_detach_unused_buf_packed (struct virtqueue *_vq) |
| static void | virtqueue_disable_cb_packed (struct virtqueue *_vq) |
| static bool | is_used_desc_packed (const struct virtqueue_packed *vq, u16 idx, bool used_wrap_counter) |
| static bool | virtqueue_poll_packed (struct virtqueue_packed *vq, u16 off_wrap) |
| static unsigned | virtqueue_enable_cb_prepare_packed (struct virtqueue_packed *vq) |
| static bool | virtqueue_enable_cb_packed (struct virtqueue *_vq) |
| static bool | virtqueue_enable_cb_delayed_packed (struct virtqueue *_vq) |
| static BOOLEAN | virtqueue_is_interrupt_enabled_packed (struct virtqueue *_vq) |
| static void | virtqueue_shutdown_packed (struct virtqueue *_vq) |
| static bool | more_used_packed (const struct virtqueue_packed *vq) |
| static void * | virtqueue_get_buf_packed (struct virtqueue *_vq, unsigned int *len) |
| static BOOLEAN | virtqueue_has_buf_packed (struct virtqueue *_vq) |
| static bool | virtqueue_kick_prepare_packed (struct virtqueue *_vq) |
| static void | virtqueue_kick_always_packed (struct virtqueue *_vq) |
| struct virtqueue * | vring_new_virtqueue_packed (unsigned int index, unsigned int num, unsigned int vring_align, VirtIODevice *vdev, void *pages, void(*notify)(struct virtqueue *), void *control) |

◆ BAD_RING

◆ BUG_ON

◆ packedvq

◆ VRING_DESC_F_INDIRECT

| #define VRING_DESC_F_INDIRECT 4 |

◆ VRING_DESC_F_NEXT

◆ VRING_DESC_F_WRITE

◆ VRING_PACKED_DESC_F_AVAIL

| #define VRING_PACKED_DESC_F_AVAIL 7 |

◆ VRING_PACKED_DESC_F_USED

| #define VRING_PACKED_DESC_F_USED 15 |

◆ VRING_PACKED_EVENT_F_WRAP_CTR

| #define VRING_PACKED_EVENT_F_WRAP_CTR 15 |

◆ VRING_PACKED_EVENT_FLAG_DESC

| #define VRING_PACKED_EVENT_FLAG_DESC 0x2 |

◆ VRING_PACKED_EVENT_FLAG_DISABLE

| #define VRING_PACKED_EVENT_FLAG_DISABLE 0x1 |

◆ VRING_PACKED_EVENT_FLAG_ENABLE

| #define VRING_PACKED_EVENT_FLAG_ENABLE 0x0 |
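Note that `VRING_PACKED_DESC_F_AVAIL` and `VRING_PACKED_DESC_F_USED` are bit positions rather than masks. The following is a minimal sketch of how they could be turned into descriptor flags, assuming the packed-ring convention from the VIRTIO 1.1 specification, in which the driver sets AVAIL to its wrap counter and USED to the inverse. The helper names `avail_flags_for_wrap`, `toggle_avail_used` and `desc_flags_example` are hypothetical and do not appear in VirtIORing-Packed.c.

```c
#include <assert.h>
#include <stdint.h>

/* Bit positions, as listed on this page. */
#define VRING_PACKED_DESC_F_AVAIL 7
#define VRING_PACKED_DESC_F_USED  15

/* Hypothetical helper: flag bits that mark a descriptor as available
 * for a given driver wrap counter (0 or 1). AVAIL follows the counter,
 * USED is its inverse. */
static uint16_t avail_flags_for_wrap(unsigned wrap_counter)
{
    if (wrap_counter)
        return (uint16_t)(1u << VRING_PACKED_DESC_F_AVAIL);
    return (uint16_t)(1u << VRING_PACKED_DESC_F_USED);
}

/* Hypothetical helper: flip both bits, as a driver would do each time
 * its position in the ring wraps back to index 0. */
static uint16_t toggle_avail_used(uint16_t flags)
{
    return (uint16_t)(flags ^ ((1u << VRING_PACKED_DESC_F_AVAIL) |
                               (1u << VRING_PACKED_DESC_F_USED)));
}

/* Usage: wrap counter 1 sets bit 7 only, wrap counter 0 sets bit 15 only. */
void desc_flags_example(void)
{
    assert(avail_flags_for_wrap(1) == 0x0080);
    assert(avail_flags_for_wrap(0) == 0x8000);
    assert(toggle_avail_used(0x0080) == 0x8000);
}
```

Keeping a precomputed flags word and toggling it on wrap avoids rebuilding the AVAIL/USED pair for every descriptor.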

◆ detach_buf_packed()

◆ is_used_desc_packed()
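The body of this function is not shown on this page. As a rough sketch of the check its signature suggests, and assuming the VIRTIO 1.1 packed-ring rule that a descriptor has been used once its AVAIL and USED bits are equal to each other and to the driver's used wrap counter, the test could look like the code below. The name `desc_flags_are_used` and the choice to take the raw flags word as input are assumptions made for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Bit positions, as listed on this page. */
#define VRING_PACKED_DESC_F_AVAIL 7
#define VRING_PACKED_DESC_F_USED  15

/* Hypothetical helper: decide whether a descriptor with the given flags
 * word counts as used for the given used wrap counter. */
static bool desc_flags_are_used(uint16_t flags, bool used_wrap_counter)
{
    bool avail = (flags & (1u << VRING_PACKED_DESC_F_AVAIL)) != 0;
    bool used  = (flags & (1u << VRING_PACKED_DESC_F_USED)) != 0;

    return avail == used && used == used_wrap_counter;
}

void is_used_example(void)
{
    assert(desc_flags_are_used(0x8080, true));   /* both bits set, counter 1 */
    assert(!desc_flags_are_used(0x0080, true));  /* available but not yet used */
    assert(desc_flags_are_used(0x0000, false));  /* both clear, counter 0 */
    assert(!desc_flags_are_used(0x8080, false)); /* left over from previous lap */
}
```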

◆ more_used_packed()

◆ virtqueue_add_buf_packed()

Definition at line 171 of file VirtIORing-Packed.c.

179{

181 unsigned int descs_used;

185 descs_used = out + in;

192 if (va_indirect && vq->num_free > 0) {

194 for (i = 0; i < descs_used; i++) {

196 desc[i].addr = sg[i].physAddr.QuadPart;

197 desc[i].len = sg[i].length;

198 }

199 vq->packed.vring.desc[head].addr = phys_indirect;

206 DPrintf(5, "Added buffer head %i to Q%d\n", head, vq->vq.index);

210 vq->packed.avail_wrap_counter ^= 1;

214 }

224 vq->packed.desc_state[id].data = opaque;

227 } else {

229 u16 curr, prev, head_flags;

231 DPrintf(6, "Can't add buffer to Q%d\n", vq->vq.index);

233 }

237 for (n = 0; n < descs_used; n++) {

240 if (n != descs_used - 1) {

242 }

243 desc[i].addr = sg[n].physAddr.QuadPart;

244 desc[i].len = sg[n].length;

248 }

249 else {

251 }

253 prev = curr;

254 curr = vq->packed.desc_state[curr].next;

256 if (++i >= vq->packed.vring.num) {

261 }

262 }

265 vq->packed.avail_wrap_counter ^= 1;

276 vq->packed.desc_state[id].data = opaque;

277 vq->packed.desc_state[id].last = prev;

285 vq->packed.vring.desc[head].flags = head_flags;

288 DPrintf(5, "Added buffer head @%i+%d to Q%d\n", head, descs_used, vq->vq.index);

289 }

291 return 0;

292}

Referenced by vring_new_virtqueue_packed().

◆ virtqueue_detach_unused_buf_packed()

Definition at line 306 of file VirtIORing-Packed.c.

307{

312 for (i = 0; i < vq->packed.vring.num; i++) {

313 if (!vq->packed.desc_state[i].data)

314 continue;

316 buf = vq->packed.desc_state[i].data;

319 }

324}

Referenced by vring_new_virtqueue_packed().

◆ virtqueue_disable_cb_packed()

◆ virtqueue_enable_cb_delayed_packed()

Definition at line 401 of file VirtIORing-Packed.c.

402{

404 bool event_suppression_enabled = vq->vq.vdev->event_suppression_enabled;

405 u16 used_idx, wrap_counter;

413 if (event_suppression_enabled) {

415 bufs = (vq->packed.vring.num - vq->num_free) * 3 / 4;

416 wrap_counter = vq->packed.used_wrap_counter;

418 used_idx = vq->last_used_idx + bufs;

419 if (used_idx >= vq->packed.vring.num) {

420 used_idx -= (u16)vq->packed.vring.num;

421 wrap_counter ^= 1;

422 }

424 vq->packed.vring.driver->off_wrap = used_idx |

432 }

435 vq->packed.event_flags_shadow = event_suppression_enabled ?

438 vq->packed.vring.driver->flags = vq->packed.event_flags_shadow;

439 }

449 vq->packed.used_wrap_counter)) {

450 return false;

451 }

453 return true;

454}

Referenced by vring_new_virtqueue_packed().
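Lines 415 to 422 of the listing above compute where the next interrupt should fire: three quarters of the buffers currently in flight past `last_used_idx`, wrapping around the ring if needed. The arithmetic can be sketched in isolation as follows. The struct `delayed_event` and the function `delayed_event_index` are hypothetical names introduced for this illustration, and the ring state is passed in as plain integers rather than through `struct virtqueue_packed`.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical result type: the event index and the wrap counter that
 * belongs to it. */
struct delayed_event {
    uint16_t used_idx;
    uint16_t wrap_counter;
};

/* Hypothetical stand-alone version of the threshold computation. */
static struct delayed_event delayed_event_index(uint16_t ring_num,
                                                uint16_t num_free,
                                                uint16_t last_used_idx,
                                                uint16_t used_wrap_counter)
{
    struct delayed_event ev;
    /* 3/4 of the descriptors that are currently outstanding. */
    uint16_t bufs = (uint16_t)((unsigned)(ring_num - num_free) * 3 / 4);

    ev.wrap_counter = used_wrap_counter;
    ev.used_idx = (uint16_t)(last_used_idx + bufs);
    if (ev.used_idx >= ring_num) {
        /* The target lies on the next lap of the ring. */
        ev.used_idx = (uint16_t)(ev.used_idx - ring_num);
        ev.wrap_counter ^= 1;
    }
    return ev;
}

void delayed_event_example(void)
{
    /* 200 of 256 descriptors in flight: 150 ahead of index 200 wraps to 94. */
    struct delayed_event ev = delayed_event_index(256, 56, 200, 1);
    assert(ev.used_idx == 94);
    assert(ev.wrap_counter == 0);
}
```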

◆ virtqueue_enable_cb_packed()

Definition at line 393 of file VirtIORing-Packed.c.

394{

399}

Referenced by vring_new_virtqueue_packed().

◆ virtqueue_enable_cb_prepare_packed()

Definition at line 362 of file VirtIORing-Packed.c.

363{

364 bool event_suppression_enabled = vq->vq.vdev->event_suppression_enabled;

370 if (event_suppression_enabled) {

371 vq->packed.vring.driver->off_wrap =

373 (vq->packed.used_wrap_counter <<

380 }

383 vq->packed.event_flags_shadow = event_suppression_enabled ?

386 vq->packed.vring.driver->flags = vq->packed.event_flags_shadow;

387 }

391}

Referenced by virtqueue_enable_cb_packed().

◆ virtqueue_get_buf_packed()

Definition at line 486 of file VirtIORing-Packed.c.

489{

497 }

502 last_used = vq->last_used_idx;

503 id = vq->packed.vring.desc[last_used].id;

504 *len = vq->packed.vring.desc[last_used].len;

506 if (id >= vq->packed.vring.num) {

509 }

510 if (!vq->packed.desc_state[id].data) {

513 }

516 ret = vq->packed.desc_state[id].data;

519 vq->last_used_idx += vq->packed.desc_state[id].num;

520 if (vq->last_used_idx >= vq->packed.vring.num) {

521 vq->last_used_idx -= (u16)vq->packed.vring.num;

522 vq->packed.used_wrap_counter ^= 1;

523 }

531 vq->packed.vring.driver->off_wrap = vq->last_used_idx |

532 ((u16)vq->packed.used_wrap_counter <<

535 }

538}

Referenced by vring_new_virtqueue_packed().
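Lines 531 and 532 of the listing above store an index and a wrap counter together in the 16-bit `off_wrap` field, but the shift amount is cut off in the listing. The sketch below assumes it is `VRING_PACKED_EVENT_F_WRAP_CTR` (15), which matches the VIRTIO 1.1 event suppression layout: bits 0 to 14 hold the descriptor offset and bit 15 holds the wrap counter. The helper names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Bit position of the wrap counter, as listed on this page. */
#define VRING_PACKED_EVENT_F_WRAP_CTR 15

/* Hypothetical helper: combine an index (< 32768) and a wrap counter
 * (0 or 1) into one off_wrap value. */
static uint16_t pack_off_wrap(uint16_t idx, uint16_t wrap_counter)
{
    return (uint16_t)(idx | (wrap_counter << VRING_PACKED_EVENT_F_WRAP_CTR));
}

/* Hypothetical helpers: split an off_wrap value back into its parts. */
static uint16_t off_wrap_index(uint16_t off_wrap)
{
    return (uint16_t)(off_wrap & ~(1u << VRING_PACKED_EVENT_F_WRAP_CTR));
}

static uint16_t off_wrap_counter(uint16_t off_wrap)
{
    return (uint16_t)(off_wrap >> VRING_PACKED_EVENT_F_WRAP_CTR);
}

void off_wrap_example(void)
{
    uint16_t v = pack_off_wrap(94, 1);
    assert(v == 0x805E);
    assert(off_wrap_index(v) == 94);
    assert(off_wrap_counter(v) == 1);
}
```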

◆ virtqueue_has_buf_packed()

◆ virtqueue_is_interrupt_enabled_packed()

◆ virtqueue_kick_always_packed()

◆ virtqueue_kick_prepare_packed()

Definition at line 546 of file VirtIORing-Packed.c.

547{

549 u16 new, old, off_wrap, flags, wrap_counter, event_idx;

550 bool needs_kick;

551 union {

552 struct {

555 };

566 new = vq->packed.next_avail_idx;

575 }

581 if (wrap_counter != vq->packed.avail_wrap_counter)

586 return needs_kick;

587}

Referenced by vring_new_virtqueue_packed().

◆ virtqueue_poll_packed()

◆ virtqueue_shutdown_packed()

Definition at line 462 of file VirtIORing-Packed.c.

463{

465 unsigned int num = vq->packed.vring.num;

466 void *pages = vq->packed.vring.desc;

473 vring_align,

475 pages,

477 _vq);

478}

Referenced by vring_new_virtqueue_packed().

◆ vring_control_block_size_packed()

◆ vring_need_event()
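The body of `vring_need_event()` is not reproduced on this page. For orientation, the sketch below uses the definition given in the VIRTIO specification and the Linux `virtio_ring.h` header, on the assumption that this file follows it: a notification is needed when `event_idx` falls inside the half-open window of indices `[old, new_idx)` that were just made available, with all arithmetic done modulo 2^16. The name `need_event` and the fixed-width types are chosen for this example only.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Sketch of the standard event-index test. The casts make the
 * subtraction wrap modulo 2^16, so the comparison stays correct when
 * the indices overflow. */
static bool need_event(uint16_t event_idx, uint16_t new_idx, uint16_t old)
{
    return (uint16_t)(new_idx - event_idx - 1) < (uint16_t)(new_idx - old);
}

void need_event_example(void)
{
    /* Entries 4 and 5 were added (old = 4, new = 6). */
    assert(need_event(4, 6, 4));    /* event index 4 was crossed */
    assert(need_event(5, 6, 4));    /* event index 5 was crossed */
    assert(!need_event(3, 6, 4));   /* already passed before this batch */
    assert(!need_event(10, 6, 4));  /* not reached yet */
    /* The window may straddle the 16-bit overflow. */
    assert(need_event(0, 1, 65535));
}
```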

◆ vring_new_virtqueue_packed()

Definition at line 598 of file VirtIORing-Packed.c.

606{

618 vq->packed.vring.num = num;

619 vq->packed.vring.desc = pages;

620 vq->packed.vring.driver = vq->vq.avail_va;

621 vq->packed.vring.device = vq->vq.used_va;

626 vq->packed.avail_wrap_counter = 1;

627 vq->packed.used_wrap_counter = 1;

628 vq->last_used_idx = 0;

630 vq->packed.next_avail_idx = 0;

631 vq->packed.event_flags_shadow = 0;

632 vq->packed.desc_state = vq->desc_states;

635 for (i = 0; i < num - 1; i++) {

636 vq->packed.desc_state[i].next = i + 1;

637 }

651}

Referenced by vio_modern_setup_vq(), and virtqueue_shutdown_packed().
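The loop at lines 635 to 637 of the listing above links every `desc_state` entry to its successor, which forms a singly linked free list of buffer ids. The sketch below shows that idea on its own. The trimmed-down `struct desc_state`, the `free_head` variable and the helper `take_free_id` are assumptions introduced for illustration; only the `next` chaining is taken from the listing.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical, reduced per-descriptor state: only the free-list link. */
struct desc_state {
    uint16_t next;
};

/* Chain entry i to entry i + 1, as in the listing. The last entry keeps
 * whatever value it was initialised with. */
static void init_free_chain(struct desc_state *state, unsigned num)
{
    for (unsigned i = 0; i + 1 < num; i++)
        state[i].next = (uint16_t)(i + 1);
}

/* Hypothetical helper: pop the id at the head of the free list. */
static uint16_t take_free_id(const struct desc_state *state, uint16_t *free_head)
{
    uint16_t id = *free_head;
    *free_head = state[id].next;
    return id;
}

void free_chain_example(void)
{
    struct desc_state state[4] = { {0}, {0}, {0}, {0} };
    uint16_t free_head = 0;

    init_free_chain(state, 4);
    assert(take_free_id(state, &free_head) == 0);
    assert(take_free_id(state, &free_head) == 1);
    assert(free_head == 2);
}
```

A linked free list lets ids be returned in any order when buffers complete out of order, which a simple counter could not handle.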

◆ vring_size_packed()